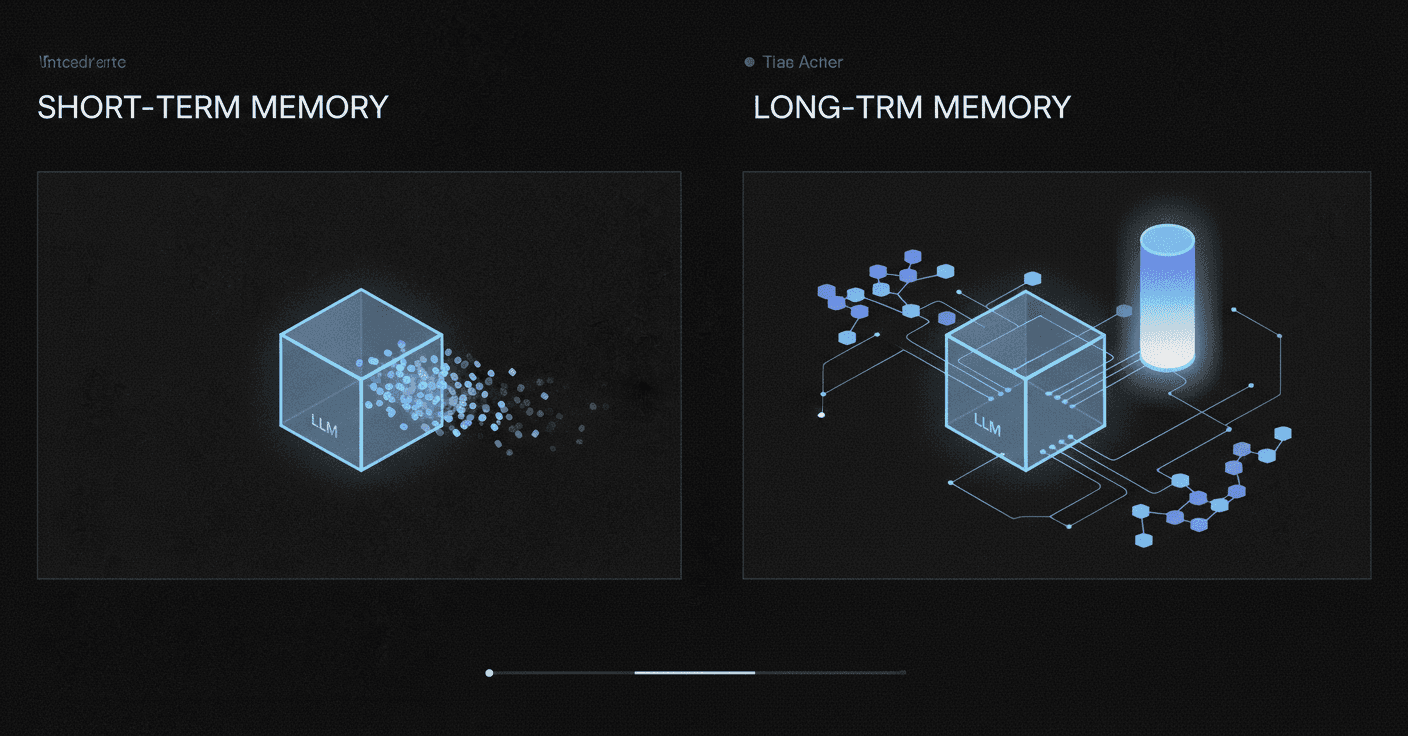

Short-Term vs Long-Term Memory in LLM Applications

Short-term memory operates within an LLM's context window (historically a few thousand tokens, though recent models accept far more), while long-term memory persists across sessions using external storage systems like vector databases or knowledge graphs. Purpose-built memory architectures report up to 95.4% overall accuracy on LongMemEval, whereas standard assistants lose roughly 30% accuracy once facts drift beyond the context window.

TLDR

Short-term memory is limited to the model's context window and disappears after each session, while long-term memory persists indefinitely through external databases

Modern memory architectures achieve 95.4% accuracy on LongMemEval benchmarks, with some systems reaching 99% single-session recall

Production memory systems require hybrid retrieval combining vector similarity with full-text search, type weighting, and temporal decay mechanisms

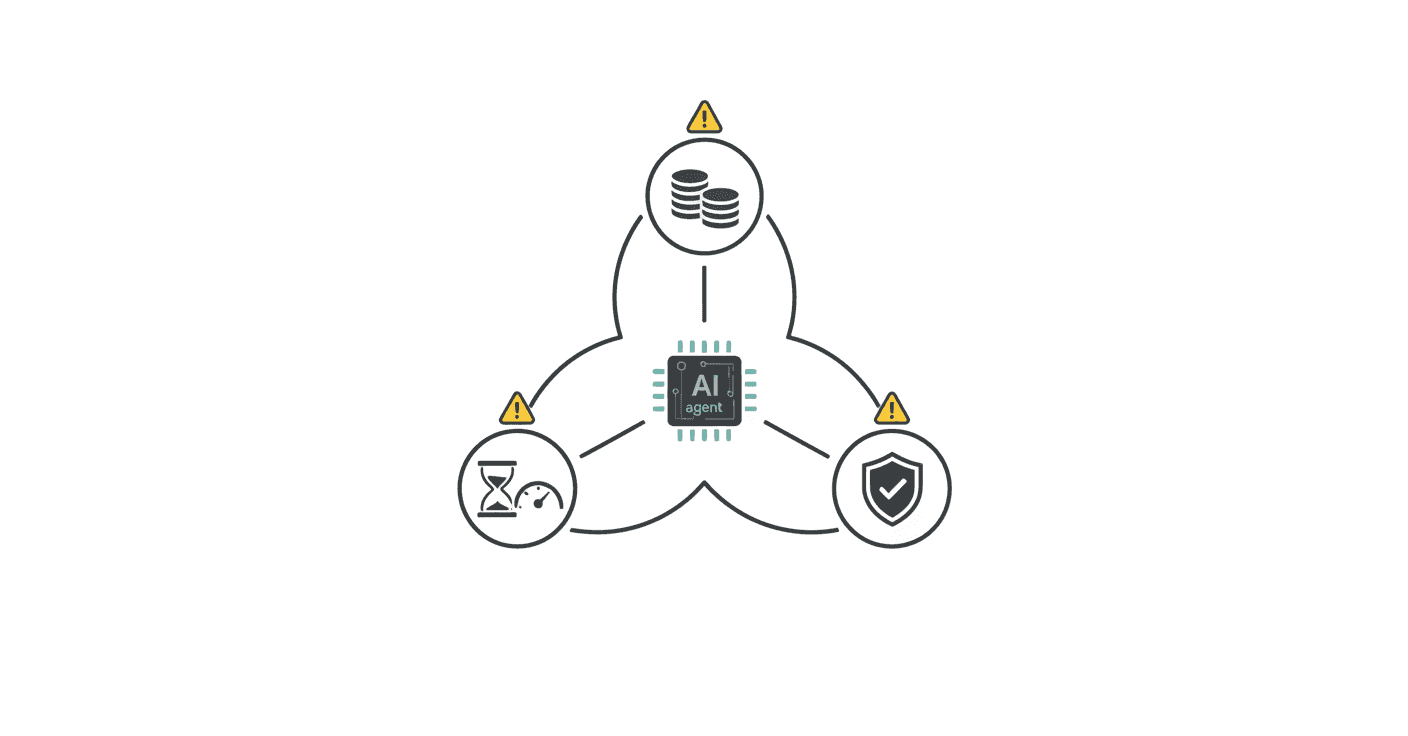

Cost considerations are critical: processing a million tokens per query can cost $10-20 in API fees without proper optimization

Five core memory capabilities define effective LLM memory: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention

Modern LLM agents break the moment knowledge drifts outside the context window. For engineering leaders shipping production AI, the debate over short-term vs long-term memory in LLMs is no longer academic. It is a production-blocking issue that determines whether an agent can remember a user's preferences from last week or forgets them mid-conversation.

This post unpacks how short-term and long-term memory differ, where each fails, and what architectural patterns are emerging to solve persistent context at scale.

Why Does Memory Suddenly Matter for LLM Agents?

Recent LLM-driven chat assistant systems have integrated memory components to track user-assistant chat histories, enabling more accurate and personalized responses. Memory is no longer a nice-to-have; it is the difference between a stateless tool and an adaptive assistant.

A new memory architecture that solves long-term forgetting in LLMs is now "delivering state-of-the-art performance on LongMemEval by enabling reliable recall, temporal reasoning, and knowledge updates at scale," according to Supermemory research.

Yet most discussions of production agents focus heavily on context window size. That framing is incomplete. As one analysis notes, "the core challenge is not eliminating context limits, but designing systems whose correctness and performance do not depend on fitting all relevant data into the model at once." A proof-of-concept agent can survive on a narrow prompt and a handful of examples. A production agent must operate over arbitrary, noisy, real-world data at substantial scale while remaining fast, predictable, and correct.

Memory is now a first-class concern for any team building AI agents that need to learn, personalize, or reason across sessions.

What's the Difference Between Short-Term and Long-Term Memory—and Where Do They Fail?

Short-term memory (STM) lives inside the model's context window: the most recent tokens the LLM can see on a single call, historically a few thousand and considerably more in recent models. Long-term memory (LTM) sits outside that window and persists across calls or sessions.

Large language model agents face fundamental limitations in long-horizon reasoning due to finite context windows, making effective memory management critical. Existing methods typically handle LTM and STM as separate components, relying on heuristics or auxiliary controllers, which limits adaptability and end-to-end optimization.

The consequences are measurable. LongMemEval-s (ICLR 2025) shows assistants lose roughly 30% accuracy once facts drift beyond STM. Architectures such as Continuum Memory persist timelines and user preferences for weeks, enabling temporal reasoning impossible with STM alone.
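To make the drift concrete, here is a minimal sketch of a sliding-window short-term buffer. It is an illustration only: the `ShortTermMemory` class is hypothetical, and whitespace-separated words stand in for a real tokenizer.

```python
from collections import deque


class ShortTermMemory:
    """Keeps only the most recent messages that fit in a token budget."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.messages = deque()

    @staticmethod
    def count_tokens(text):
        # Assumption: whitespace-separated words approximate tokens.
        return len(text.split())

    def add(self, message):
        self.messages.append(message)
        # Evict the oldest messages until the window fits the budget again.
        while sum(self.count_tokens(m) for m in self.messages) > self.max_tokens:
            self.messages.popleft()

    def context(self):
        return "\n".join(self.messages)


stm = ShortTermMemory(max_tokens=12)
stm.add("User: my favorite editor is Vim")   # 6 tokens
stm.add("User: what is the weather today")   # 6 tokens, window is now full
stm.add("User: recommend an editor plugin")  # 5 tokens, evicts the first message
print("Vim" in stm.context())  # False: the preference has drifted out of STM
```

Once the third message arrives, the earliest one is evicted, so the stated preference is no longer visible to the model on the next call.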

Where do both fail?

STM saturation: Once the context window fills, older facts vanish. Position bias means LLMs struggle when relevant information is buried in the middle of a document.

LTM fragmentation: Classic retrieval-augmented generation (RAG) treats memory as a stateless lookup table: information persists indefinitely, retrieval is read-only, and temporal continuity is absent. Links vanish, knowledge goes stale, and agents cannot disambiguate what changed versus what remained constant.

All-or-nothing traps: Most existing systems adopt an "all-or-nothing" approach to memory usage. Incorporating all relevant past information can lead to Memory Anchoring, where the agent is trapped by past interactions, while excluding memory entirely results in under-utilization and the loss of important interaction history.

Key takeaway: Without explicit mechanisms for temporal chaining, versioning, and selective retention, both STM and LTM degrade under production load.
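One way to picture versioning and temporal chaining is a store that closes the old version of a fact whenever a new value arrives, rather than overwriting it. The sketch below is a toy under stated assumptions: `VersionedMemory` and `FactVersion` are hypothetical names, writes are assumed to arrive in chronological order, and no specific product works exactly this way.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class FactVersion:
    value: str
    valid_from: datetime
    valid_to: Optional[datetime] = None  # None means "still current"


class VersionedMemory:
    """Keeps a chronological chain of versions per fact key."""

    def __init__(self):
        self._facts = {}

    def write(self, key, value, at):
        history = self._facts.setdefault(key, [])
        if history and history[-1].value == value:
            return  # nothing changed, so no new version is created
        if history:
            history[-1].valid_to = at  # close the previous version
        history.append(FactVersion(value, at))

    def current(self, key):
        history = self._facts.get(key)
        return history[-1].value if history else None

    def as_of(self, key, at):
        # Walk the chain to find the version that was valid at time `at`.
        for version in self._facts.get(key, []):
            if version.valid_from <= at and (version.valid_to is None or at < version.valid_to):
                return version.value
        return None


memory = VersionedMemory()
memory.write("user.city", "Berlin", datetime(2025, 1, 1))
memory.write("user.city", "Lisbon", datetime(2025, 3, 1))
print(memory.current("user.city"))                      # Lisbon
print(memory.as_of("user.city", datetime(2025, 2, 1)))  # Berlin
```

Because old versions are closed instead of deleted, the agent can answer both "where does the user live now?" and "where did they live in February?".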

Architectural Paths to Persistent Context

This section surveys the leading designs that push beyond naïve RAG.

Baseline: RAG as Stateless Lookup

Retrieval-augmented generation has become the default strategy for providing LLM agents with contextual knowledge. But RAG treats memory as a stateless lookup table: retrieval is read-only, temporal continuity is absent, and there is no write-back or versioning.
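The "stateless lookup" behavior can be shown with a toy retriever. This is a sketch, not a production pipeline: bag-of-words counts stand in for a real embedding model, and the `embed`, `cosine`, and `retrieve` helpers are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    # Assumption: word counts stand in for a learned embedding.
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Read-only lookup: ranks documents by similarity, never updates them."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


documents = [
    "the user lives in berlin",   # older fact
    "the user lives in lisbon",   # newer fact that should supersede it
    "the user prefers dark mode",
]
top = retrieve("city the user lives in", documents)
print(top)
```

Both location facts come back with identical scores. Nothing in the lookup records which one is current, which is the gap that write-back and versioning are meant to close.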

Common pitfalls with baseline RAG:

Wrong embeddings and chunking that destroy context

Vector databases that buckle under production load

Cost and latency that compound fast. Processing a million tokens per query gets expensive. At current rates, a single query could cost $10-20 in API fees.
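A back-of-the-envelope calculation shows why this compounds. The sketch below assumes a flat price of $10 per million input tokens (the low end of the range quoted above) and a hypothetical retrieval step that trims the prompt to 4,000 tokens; both numbers are illustrative, not vendor pricing.

```python
def query_cost(tokens, usd_per_million_tokens):
    """Input-token cost of one query under a flat per-token price."""
    return tokens / 1_000_000 * usd_per_million_tokens


PRICE = 10.0  # assumed USD per million input tokens

full_context = query_cost(1_000_000, PRICE)  # whole knowledge base in the prompt
retrieved = query_cost(4_000, PRICE)         # only the retrieved passages

print(f"full context: ${full_context:.2f} per query")
print(f"retrieved:    ${retrieved:.2f} per query")
print(f"10,000 queries/day, full context: ${full_context * 10_000:,.0f}")
```

Under these assumptions the full-context approach costs $10 per query and the retrieved prompt about four cents, a 250x difference before output tokens are counted.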

Tool-augmented configurations can improve accuracy (e.g., 47.5% to 67.5% for GPT-4o) while increasing latency by orders of magnitude (approximately 8s to 317s per example).

Continuum & Graph-Based Systems

A new class of architectures addresses the limitations of stateless RAG by treating memory as a first-class, stateful primitive.

Platforms like Cortex already combine vector retrieval with versioned knowledge graphs, preserving chronology while keeping latency under 200 ms. This approach enables production agents to reason across sessions without the fragmentation that breaks traditional RAG systems.

| Architecture | Core Mechanism | Benchmark Performance |
|---|---|---|
| Continuum Memory Architecture (CMA) | Persistent storage, selective retention, associative routing, temporal chaining, consolidation into higher-order abstractions | Necessary primitive for long-horizon agents |
| MAGMA | Multi-graph agentic memory across semantic, temporal, causal, and entity dimensions; policy-guided traversal | Outperforms state-of-the-art on LoCoMo and LongMemEval |
| Synapse | Dynamic graph with spreading activation, lateral inhibition, temporal decay; Triple Hybrid Retrieval | Significantly outperforms state-of-the-art in temporal and multi-hop reasoning |

These systems maintain and update internal state across interactions through mechanisms like persistent storage and temporal chaining. CMA is presented as a necessary architectural primitive for long-horizon agents, although challenges such as latency, drift, and interpretability remain.
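Two of the mechanisms named in the table, spreading activation and temporal decay, can be sketched on a toy graph. This is a simplified illustration and not the published Synapse algorithm: lateral inhibition is omitted, and the graph, the `spread` factor, and the 30-day half-life are assumptions chosen for the example.

```python
def spread_activation(graph, seeds, hops=2, spread=0.5):
    """Propagate activation from seed nodes along weighted edges."""
    activation = {node: 1.0 for node in seeds}
    frontier = dict(activation)
    for _ in range(hops):
        next_frontier = {}
        for node, energy in frontier.items():
            for neighbor, weight in graph.get(node, {}).items():
                passed = energy * weight * spread
                next_frontier[neighbor] = next_frontier.get(neighbor, 0.0) + passed
        for node, energy in next_frontier.items():
            activation[node] = activation.get(node, 0.0) + energy
        frontier = next_frontier
    return activation


def apply_temporal_decay(activation, age_days, half_life_days=30.0):
    """Halve a node's score for every half-life that has passed since it was stored."""
    return {
        node: score * 0.5 ** (age_days.get(node, 0.0) / half_life_days)
        for node, score in activation.items()
    }


# Hypothetical memory graph: edges carry association strength.
graph = {
    "alice": {"berlin": 1.0, "lisbon": 1.0},
    "lisbon": {"portugal": 1.0},
}
age_days = {"berlin": 60.0, "lisbon": 0.0}  # the Berlin memory is two months old

scores = apply_temporal_decay(spread_activation(graph, ["alice"]), age_days)
print(sorted(scores.items(), key=lambda item: item[1], reverse=True))
```

Starting from "alice", both cities receive the same raw activation, but decay pushes the older Berlin memory below Lisbon, and the second hop surfaces "portugal" even though the query never mentioned it.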

How Do We Measure What an LLM Actually Remembers?

Objective evaluation of memory is hard. Context windows test recall; benchmarks like LongMemEval test memory.

LongMemEval is a comprehensive benchmark designed to evaluate five core long-term memory abilities of chat assistants: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention.
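When running this kind of evaluation internally, it helps to report accuracy per ability rather than a single number. The sketch below is not the official LongMemEval harness; the `accuracy_by_ability` helper is hypothetical and the result records are placeholders, not measured data.

```python
from collections import defaultdict

ABILITIES = (
    "information extraction",
    "multi-session reasoning",
    "temporal reasoning",
    "knowledge updates",
    "abstention",
)


def accuracy_by_ability(results):
    """results: iterable of (ability, is_correct) pairs from graded answers."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for ability, is_correct in results:
        if ability not in ABILITIES:
            raise ValueError(f"unknown ability: {ability}")
        totals[ability] += 1
        correct[ability] += int(is_correct)
    # Abilities with no graded questions are reported as None, not 0.
    return {a: (correct[a] / totals[a] if totals[a] else None) for a in ABILITIES}


# Placeholder records for illustration only.
results = [
    ("information extraction", True),
    ("temporal reasoning", True),
    ("temporal reasoning", False),
    ("knowledge updates", True),
    ("abstention", False),
]
for ability, score in accuracy_by_ability(results).items():
    print(f"{ability}: {score}")
```

A per-ability breakdown makes regressions visible, for example a system that extracts facts well but fails to abstain when the answer was never stated.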

MemoryAgentBench provides comprehensive coverage of four core memory competencies: accurate retrieval, test-time learning, long-range understanding, and selective forgetting. Empirical results reveal that current methods fall short of mastering all four competencies, underscoring the need for further research.

Snapshot of State-of-the-Art Results

Current leaderboard numbers illustrate where the field stands:

| System | LongMemEval Score | Notable Strength |
|---|---|---|
| OMEGA | 95.4% overall | 99% single-session recall, 100% preference application |
| Hindsight | 91.4% | Lifts accuracy from 39% to 83.6% over full-context baseline |
| Supermemory | State-of-the-art on LongMemEval-s | 71.43% multi-session, 76.69% temporal reasoning |

These results highlight that purpose-built memory architectures dramatically outperform both full-context baselines and naïve RAG approaches.

How Can Teams Build Memory-Aware Systems in 2026?

Actionable guidance for CTOs and engineers building memory-aware LLM applications:

Choose the right vector database for your scale. Vector databases power the retrieval layer in RAG workflows by storing document and query embeddings as high-dimensional vectors. Balance performance, cost, and features against your specific application requirements.

Measure what matters. Measure quality with groundedness, usefulness, coverage, p95 latency, and cost per answer—not just demos.

Start lean. One framework plus one retrieval layer plus a clear permission model beats a complex stack.

Optimize hybrid retrieval. Hybrid search (the integration of lexical and semantic retrieval) has become a cornerstone of modern information retrieval systems. A "weakest link" phenomenon exists where a weak path can substantially degrade overall accuracy, highlighting the need for path-wise quality assessment before fusion.

Adopt learned sparse representations. Seismic, an approximate retrieval index for learned sparse embeddings, reaches sub-millisecond per-query latency on various sparse embeddings while maintaining high recall, making it one to two orders of magnitude faster than state-of-the-art inverted index-based solutions.

Consider recall mechanisms. Adding semantic recall to term match-based recall improved conversion by 3% in one online A/B test.

Key takeaway: Production memory systems require hybrid retrieval, careful latency budgeting, and continuous evaluation against real-world benchmarks.
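The hybrid retrieval advice above can be sketched with reciprocal rank fusion (RRF), a common way to merge lexical and semantic rankings, plus a per-path recall check before fusing. RRF is one fusion option among several and is not necessarily what the cited systems use; the document IDs, the relevance labels, and the conventional `k=60` constant are assumptions for the example.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def recall_at_k(ranking, relevant, k):
    """Fraction of relevant documents that appear in the top k results."""
    return len(set(ranking[:k]) & relevant) / len(relevant)


# Hypothetical outputs of the two retrieval paths for one query.
lexical = ["d1", "d3", "d2"]
semantic = ["d2", "d1", "d4"]
relevant = {"d1", "d2"}  # assumed ground-truth labels

# Path-wise quality check: score each path on its own before fusion.
for name, ranking in (("lexical", lexical), ("semantic", semantic)):
    print(name, recall_at_k(ranking, relevant, k=2))

fused = reciprocal_rank_fusion([lexical, semantic])
print("fused", fused, recall_at_k(fused, relevant, k=2))
```

Scoring each path separately is what exposes a "weakest link": here the lexical path reaches only 0.5 recall at 2, which would be worth investigating before trusting it with equal weight in the fusion.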

Cost, Latency & Governance Pitfalls Nobody Warned You About

Memory at scale introduces hidden costs and regulatory traps:

Cost compounds fast. Processing a million tokens per query gets expensive. At current rates, a single query against a full knowledge base could cost $10-20 in API fees.

Regulatory compliance is non-negotiable. The EU AI Act's Article 14 mandates demonstrable human oversight for high-risk systems, and the Act's obligations phase in over several years, with the first provisions applying from February 2025.

Latency trade-offs are real. Large language models are stateless by design. Every inference call starts cold, with no persistent recall of prior context, user history, or domain-specific knowledge. Vector databases solve this by converting unstructured data into high-dimensional embeddings and enabling semantic retrieval at millisecond latency. In benchmarks, Pinecone returned top-5 results at a p95 latency of 92ms; Weaviate Cloud returned at 118ms p95 under equivalent load.

Project cancellation rates are high. Gartner predicts over 40% of agentic AI projects will be canceled by 2027 due to cost overruns, unclear value, or inadequate risk controls. An MIT study reports that 95% of AI pilots fail to scale beyond proof-of-concept.
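The latency figures above are quoted at p95, so it is worth being explicit about how that number is derived. The sketch below uses the nearest-rank method on hypothetical latency samples; the values are invented for illustration and are not measurements of any vendor.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


# Hypothetical per-query retrieval latencies in milliseconds.
latencies_ms = [80, 85, 90, 92, 95, 100, 110, 120, 150, 400]

mean = sum(latencies_ms) / len(latencies_ms)
print(f"mean: {mean:.1f} ms")
print(f"p50:  {percentile(latencies_ms, 50)} ms")
print(f"p95:  {percentile(latencies_ms, 95)} ms")
```

In this sample the median is 95 ms while p95 is 400 ms: a single slow query dominates the tail, which is why latency budgets for memory retrieval are usually set on p95 rather than on the average.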

Key Takeaways for Shipping Memory-First LLM Apps

Memory is no longer optional for production AI agents. The gap between stateless LLMs and memory-aware systems is closing, but teams must choose architectures that support persistent storage, temporal reasoning, and efficient retrieval.

One API that orchestrates all layers (ACID + Vector + Facts + Graph) simplifies the path from prototype to production. Cortex provides persistent memory infrastructure for AI agents, combining ACID conversations, vector indexing, fact extraction, and graph databases into a unified platform.

Supermemory research describes "a new memory architecture that solves long-term forgetting in LLMs, delivering state-of-the-art performance on LongMemEval by enabling reliable recall, temporal reasoning, and knowledge updates at scale." That level of performance is now within reach for teams willing to move beyond stateless RAG.

For teams building production-grade AI agents, the choice is clear: invest in memory infrastructure now, or watch agents break as knowledge drifts outside the context window.

Frequently Asked Questions

What is the difference between short-term and long-term memory in LLMs?

Short-term memory (STM) in LLMs refers to the information within the model's context window, historically the last few thousand tokens and considerably more in recent models. Long-term memory (LTM) persists across sessions and is crucial for maintaining context over time. STM is limited by context window size, while LTM requires mechanisms for temporal continuity and versioning.

Why is memory important for LLM agents?

Memory is essential for LLM agents to provide accurate and personalized responses. It allows agents to remember user preferences and maintain context across sessions, transforming them from stateless tools into adaptive assistants. Without effective memory management, agents struggle with long-horizon reasoning and context retention.

What are the limitations of traditional retrieval-augmented generation (RAG) systems?

Traditional RAG systems treat memory as a stateless lookup table, lacking temporal continuity and versioning. This leads to issues like context fragmentation, outdated information, and inability to handle changes over time. These limitations hinder the adaptability and accuracy of LLM agents in production environments.

How does Cortex address memory challenges in LLM applications?

Cortex combines vector retrieval with versioned knowledge graphs to preserve chronology and enable reasoning across sessions. It integrates memory directly into the retrieval layer, supporting persistent memory, user-level personalization, and temporal reasoning, which are crucial for production-grade AI agents.

What are the cost and latency considerations for memory systems in LLMs?

Memory systems can introduce significant costs, especially when processing large volumes of data. Latency is also a concern: because large language models are stateless by design, every call must first retrieve the context it needs. Efficient retrieval mechanisms, like those provided by Cortex, help manage these challenges by enabling fast, accurate, and cost-effective memory operations.

Sources

https://omegamax.co/benchmarks

https://arxiv.org/abs/2410.10813

https://supermemory.ai/research

https://www.bolshchikov.com/p/deep-agents-at-scale

https://arxiv.org/abs/2601.01885

https://arxiv.org/abs/2601.09913

https://arxiv.org/abs/2601.05107

https://solvedbycode.ai/blog/complete-guide-rag-vector-databases-2026

https://arxiv.org/abs/2601.02663

https://hydradb.com/

https://arxiv.org/abs/2601.03236

https://arxiv.org/abs/2601.02744

https://openreview.net/pdf/2b14e3fecd25cd9511348c6a9ad470c2a2161634.pdf

https://arxiv.org/abs/2512.12818

https://research.aimultiple.com/vector-database-for-rag

https://aitoolsbusiness.com/ai-rag-for-business/

https://arxiv.org/abs/2508.01405

https://brlikhon.engineer/blog/building-production-agentic-ai-systems-in-2026-langgraph-vs-autogen-vs-crewai-complete-architecture-guide

https://finlyinsights.com/pinecone-and-vector/